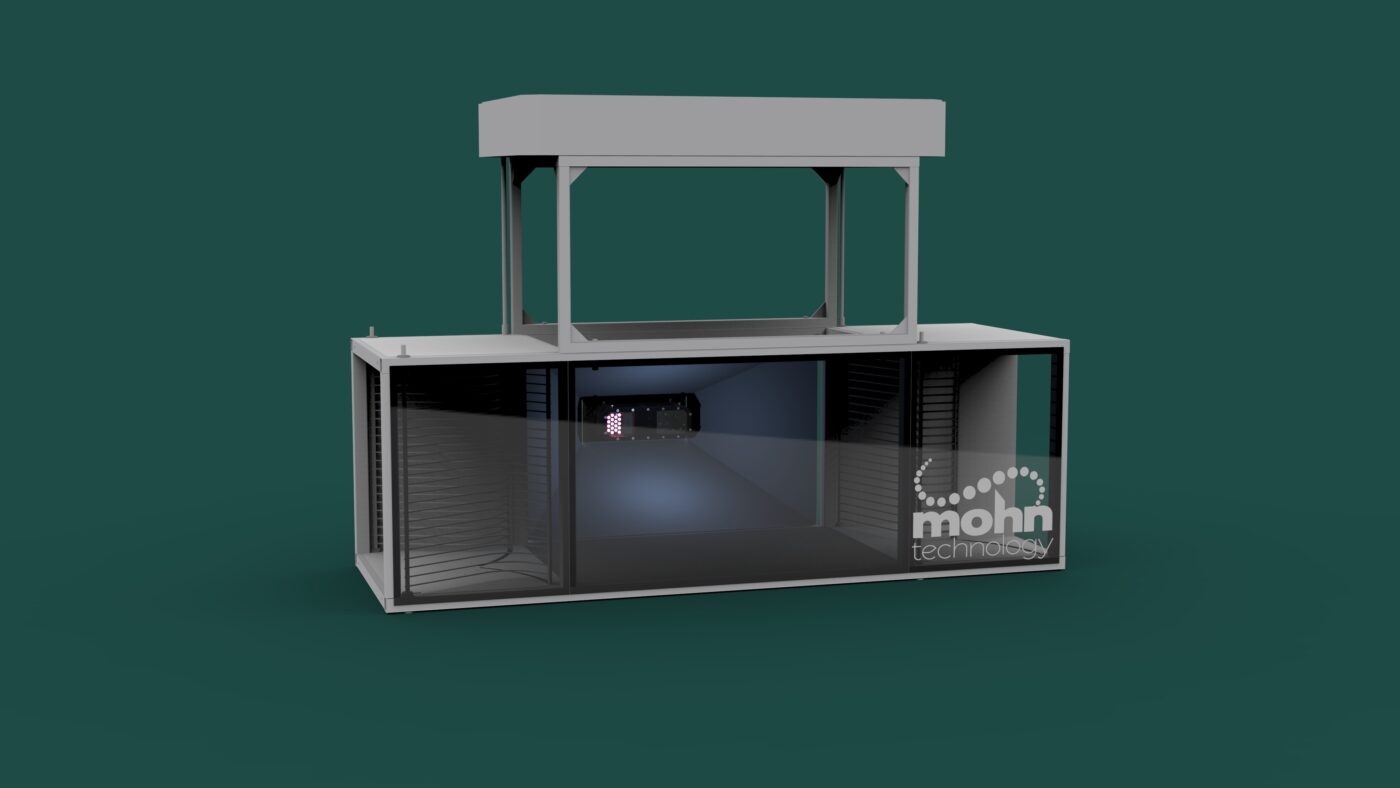

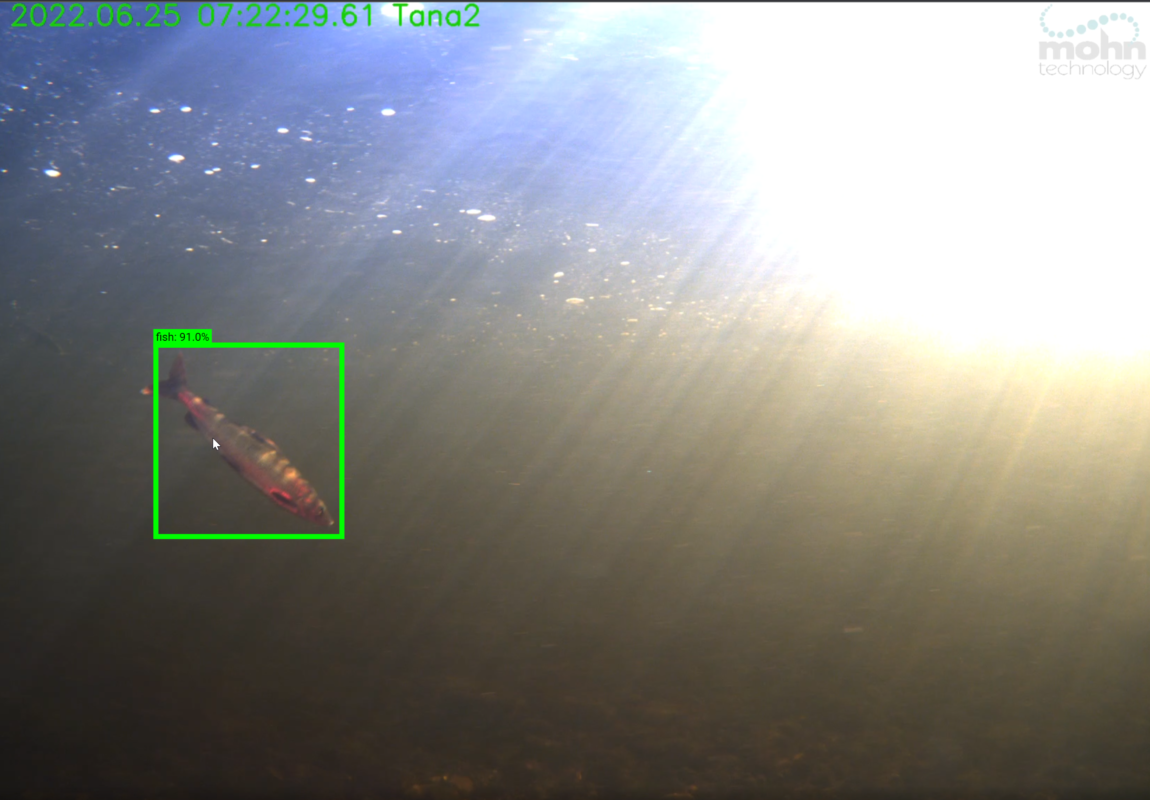

Mohn Technology is developing an AI-based inspection drone for the aquaculture industry, called the Sentinel Inspection Drone. The project is funded by FHF – Norwegian Seafood Research Fund.

Mohn Technology has long and broad experience with both AI-based and conventional machine vision. We combine that experience with knowledge of underwater camera systems and robotics to create a tool that will help fish farmers inspect their facilities.

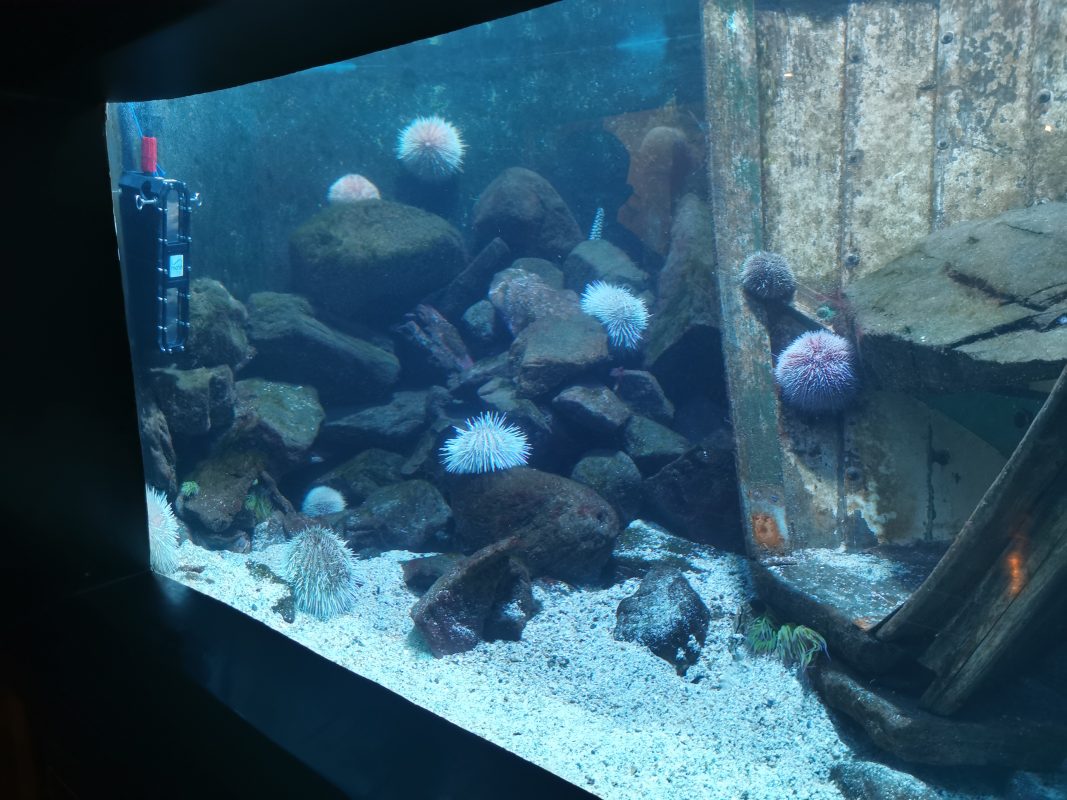

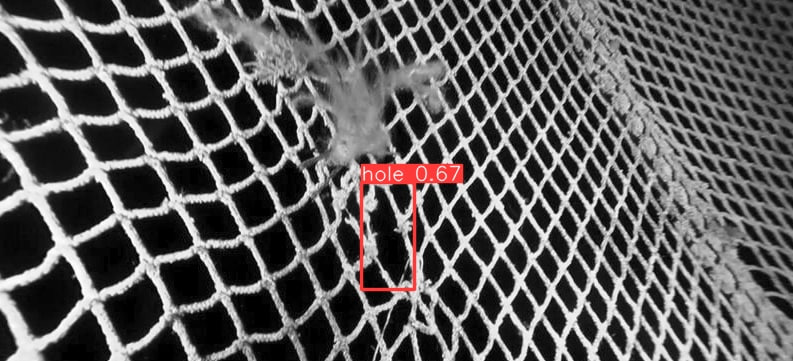

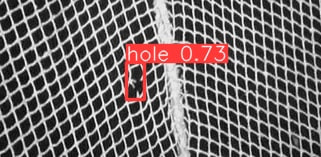

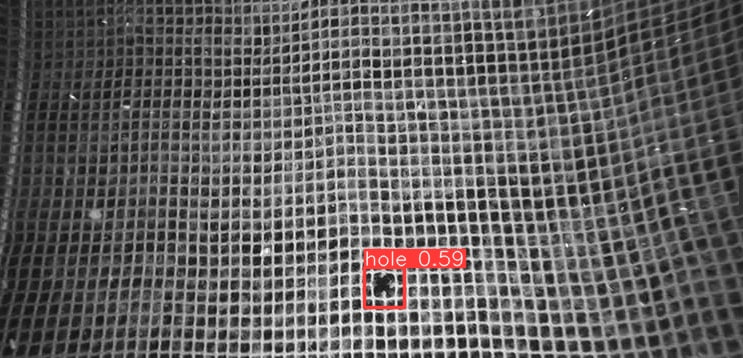

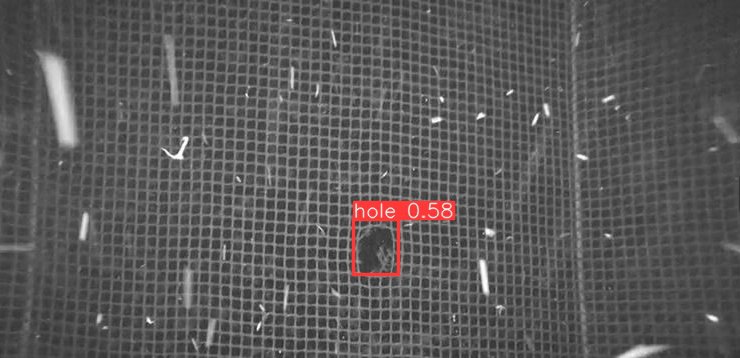

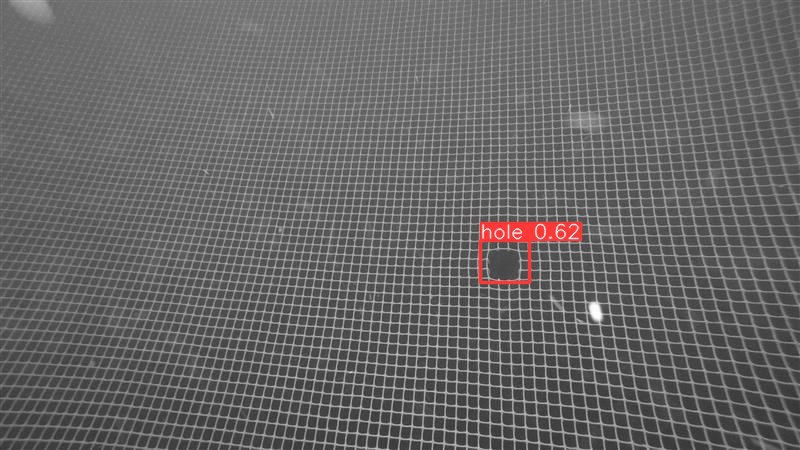

We have been working on various approaches to detecting holes in a way that is both sensitive and accurate. A net pen is not just a net: it has ropes, knots, fouling, attached equipment and so on. We started the project with more conventional machine vision algorithms that look for openings with a larger area than the average. This works well for a clean and orderly net without foreign objects, equipment or ropes, but unfortunately real nets rarely look like that. We have therefore developed machine vision algorithms that use artificial intelligence (AI) to seek out, track and report the holes.
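To illustrate the conventional idea of flagging openings that are larger than the average, here is a minimal Python sketch. It is not the project's actual algorithm: the function name `flag_large_openings`, the `factor` threshold and the sample areas are all hypothetical, and the median is used as the "average" only because it is less affected by the outliers being searched for.

```python
from statistics import median

def flag_large_openings(areas, factor=2.0):
    """Return indices of openings whose area exceeds factor * median area.

    `areas` is assumed to hold the pixel area of each mesh opening that an
    earlier (not shown) segmentation step found in one camera frame.
    """
    if not areas:
        return []
    typical = median(areas)  # robust estimate of a normal mesh opening
    return [i for i, area in enumerate(areas) if area > factor * typical]

# Hypothetical areas for one frame: six normal meshes and one large opening.
areas = [100, 98, 103, 101, 410, 99, 102]
print(flag_large_openings(areas))  # prints [4]
```

A rule like this has no notion of context, so a gap next to a rope or a piece of equipment would be flagged just like a real hole, which is consistent with the limitation described above.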

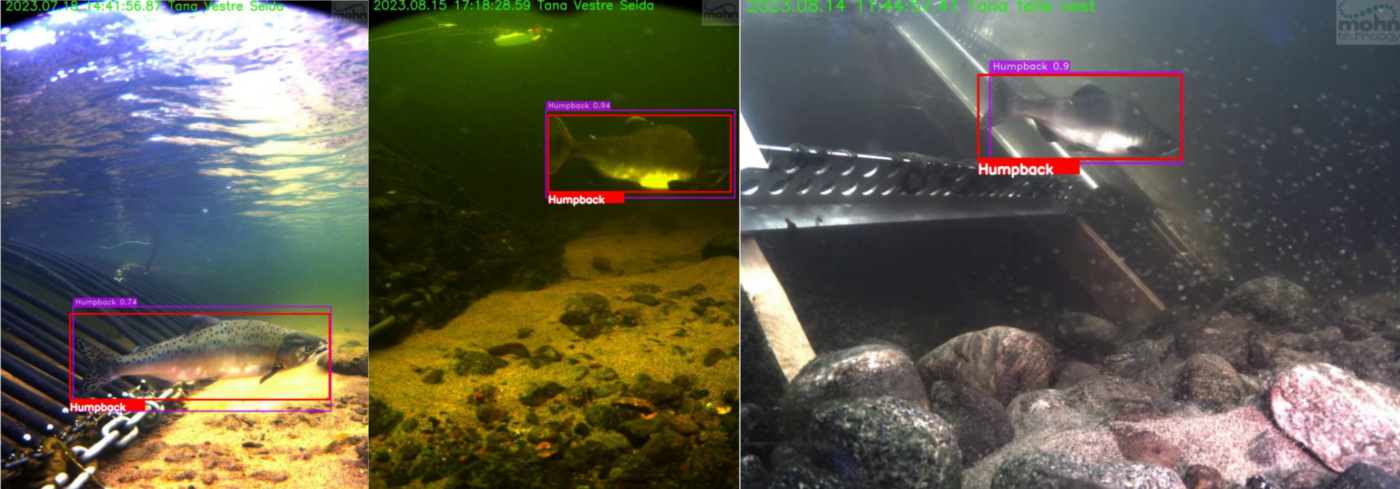

Mohn Technology recently received a set of images of holes discovered in Norwegian facilities during operation. The holes were not big, but they gave us a realistic test of how holes would look in an actual facility. Until now, we had only tested with simulations, pool tests and scale models.

The images above are examples taken from real inspections where holes were found. All of the holes were detected.

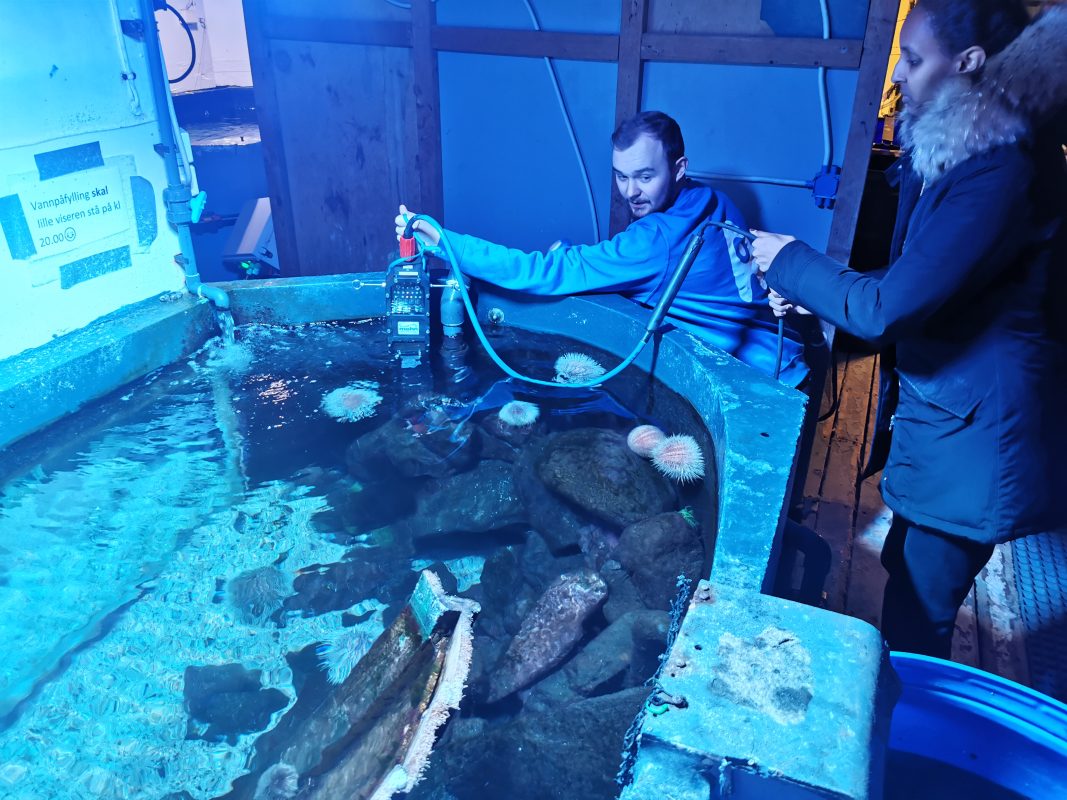

The video above shows the hole detection and tracking algorithms running on a video stream from a pool test. Note that this is a preliminary real-time algorithm, and drone positioning data was not available to the tracking algorithm during this test, so a hole's ID is lost whenever tracking of that hole is momentarily lost. During operation, the operator would get a notification when holes are detected, and the damage could then be inspected manually. The net used is a real aquaculture net pen received from a partner.
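The ID behaviour described above can be reproduced with a very simple image-space tracker. The following Python sketch is an illustration under stated assumptions, not the tracker used in the video: the class `NaiveHoleTracker`, the `max_distance` threshold and the pixel coordinates are hypothetical. It matches each detection to the nearest existing track and drops any track that is not matched in the current frame.

```python
import math
from itertools import count

class NaiveHoleTracker:
    """Nearest-centroid tracker working purely in image coordinates."""

    def __init__(self, max_distance=50.0):
        self.max_distance = max_distance  # max pixel jump between frames
        self.tracks = {}                  # hole ID -> last seen centroid
        self._ids = count(1)              # source of fresh hole IDs

    def update(self, detections):
        """Assign an ID to every detected centroid in the current frame."""
        unmatched = dict(self.tracks)
        updated = {}
        for point in detections:
            # Closest not-yet-matched track, or None if there is none left.
            best = min(unmatched,
                       key=lambda i: math.dist(unmatched[i], point),
                       default=None)
            if best is not None and math.dist(unmatched[best], point) <= self.max_distance:
                updated[best] = point
                del unmatched[best]
            else:
                updated[next(self._ids)] = point  # treated as a new hole
        # Tracks with no detection in this frame are dropped immediately.
        self.tracks = updated
        return updated

tracker = NaiveHoleTracker()
print(tracker.update([(100, 100)]))  # {1: (100, 100)}
print(tracker.update([(105, 102)]))  # {1: (105, 102)}  same hole, same ID
print(tracker.update([]))            # {}  detection lost for one frame
print(tracker.update([(110, 104)]))  # {2: (110, 104)}  same hole, new ID
```

Because this sketch only knows where a hole was in the previous frame, a single missed detection is enough to make the same hole reappear under a new ID. Keeping a stable ID across such gaps would require some additional reference, for example the drone positioning data that was unavailable in the pool test.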